Ethical Carcinization

Your morality either looks an awful lot like virtue ethics or it’s helplessly stupid

Before we get started, I am writing this article primarily for two reasons:

1. For whatever reason, Substack has a shortage of virtue ethicists. This is evidenced by how well my notes do when they make very simple critiques of Utilitarianism, a moral philosophy which has no shortage of diligent adherents here.

2. Joseph Rahi basically told me to, and the honorable thing for me to do is to oblige[1].

But be warned: I am not a philosopher by training. In fact, my deep-rooted philosophy of pragmatism precludes me from putting in the 10k hours required to read everything written about moral philosophies I disagree with foundationally, even if it would make me 4% better at defending my intuitions against those of the people who support them.

What do crabs have in common with Virtue Ethics?

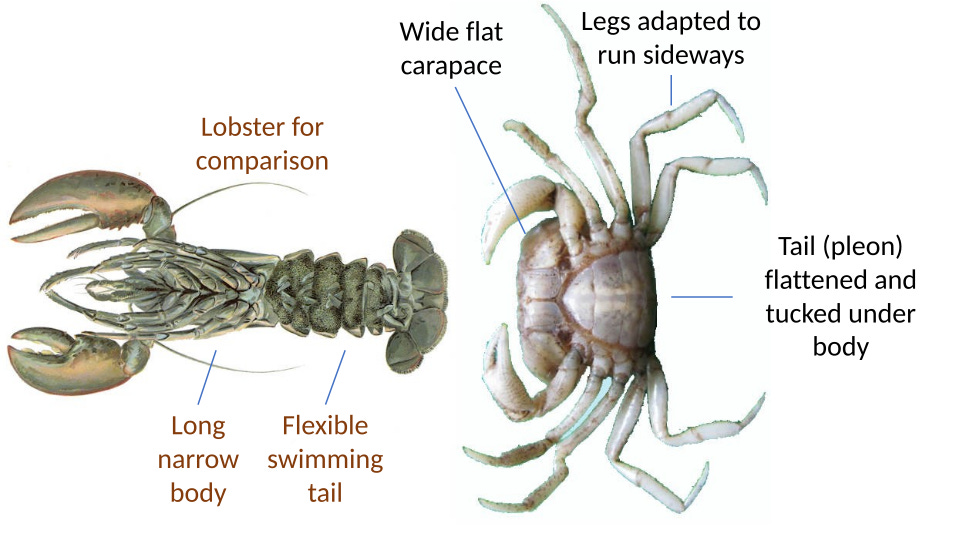

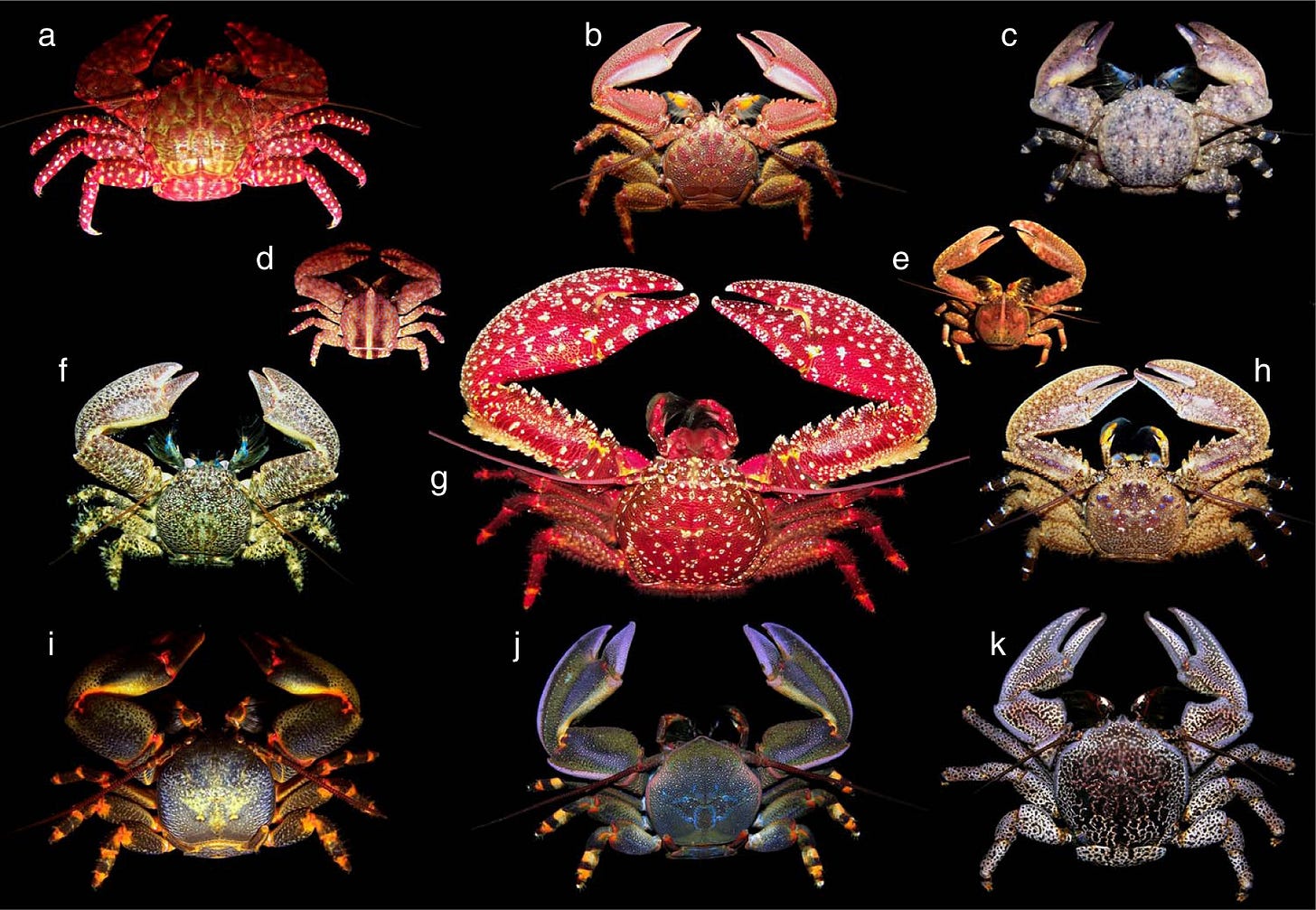

Carcinization is the evolutionary process of becoming crab-like. While there is only one lineage of true crabs, their form is so well adapted to a broad range of environments that no fewer than five times animals have slowly reorganized their cells to mimic it; to become crabs and so to survive in whatever hostile climate they find themselves.

There are three major moral philosophies: Virtue Ethics, Utilitarianism, and Deontology. Virtue Ethics is the oldest of the three and tells people to cultivate virtue by seeking practical wisdom (or phronesis) and creating virtuous people. Utilitarianism, popularized by Jeremy Bentham in the 1800s and now by his bulldog, begins just as simply: make moral decisions to do the most good for the most people. And while it starts out simple enough, it presupposes that people know and agree upon what “good” means (more on this later). Deontology, developed by Immanuel Kant, is perhaps the most foreign of the three. It tells you: “act only according to that maxim whereby you can at the same time will that it should become a universal law.” This is an ethics-as-duty view with no room for reason. Also, it presupposes that people know and agree upon, at least initially, what “good” means (more on this later).

Utilitarianism and Deontology in their simple forms have glaringly obvious blind spots. And like the king crab and the coconut crab, they fix these blind spots by becoming more crab-like, that is, by mimicking Virtue Ethics and its incredibly adaptive framework.

Utilitarians build a tower from the top down

I have not kept my distaste for what I will call capital-U Utilitarianism a secret. When I mock Utilitarianism, I mock the totalizing application of what should be useful calculations as a moral world order. The specific way I have shown my dislike, i.e., through Substack notes, has been liked by enough (virtuous) people that any Utilitarian worth their salt would have to concede that the notes themselves are a net good.

In its simplest form, Utilitarianism asks the question “what action, if taken, will lead to the greatest good?” This is a consequentialist approach to ethics, one that judges an action’s morality by its impact in the world. It’s known as Act Utilitarianism, and it has a number of glaringly obvious problems, occurring both at the time of decision and, ironically, when the ultimate consequences of those actions unfold.

There’s the classic problem of the repugnant conclusion: if total net utility is the end goal, wouldn’t 10 quadrillion people (Utilitarians love huge numbers) with lives barely worth living be better than what we have today? There’s the problem raised by Ursula K. Le Guin in The Ones Who Walk Away from Omelas, wherein a “utopia” is made for everyone else by inflicting unjust suffering on one very unlucky child[2]. There’s the Utility Monster thought experiment: if maximizing utility is the end goal and someone creates a machine capable of experiencing more pleasure through consuming you than you experience pain, you are obligated to feed yourself to it.

There are so many horrible thought experiments generated by Utilitarianism that you may think it reflects poorly on their whole philosophical structure. That it was propped up with no grounding, no foundation at all—a glorious skyscraper whose contractors started by building the top level. It’s a beautiful floor, but unfortunately it comes cascading to the ground the second you look at it closely. You have to start from the bottom or the top will fall down on you.

So Act Utilitarians are left in the unenviable position of having to defend countless monstrous outcomes. To their slight credit some do, saying that the repugnant conclusion is good, that robots who can experience more pleasure than people should take priority over us and actually they should be allowed to vote.

Others, to their credit, try to make the philosophy more reasonable. They realize that optimizing every individual “Act” will lead to a world in desperate pursuit of a pathetic local maximum. To make the actual best world, we need to abstract, to think bigger. We need to create a set of rules that, if followed, lead to the greatest good. No longer is it okay to shoplift, even if it would make you happier than it would make the shop owner sad. A world where this behavior is accepted would be a worse world for everyone (great insight!). And thus Rule Utilitarianism is formed.

The problem for the Rule Utilitarian is that creating people who do the best they can, as the Virtue Ethicist would recommend, will always lead to better outcomes than focusing on the end goal itself. Put differently, by this sort of consequentialist logic, consequentialists should just be Virtue Ethicists, and they should encourage as many people as possible to be Virtue Ethicists too. Ah, no problem, we will call this “Indirect” Utilitarianism and so claim credit for recreating what looks an awful lot like a crab.

But these crabs are not the same. Behind every “rule” or “indirect” consequentialist there is someone who, if they are persuaded that the world we currently live in is but a local maximum of utility, would just as soon sacrifice all that we are and everything we’ve built for a different world. If treating people unfairly is a hindrance, we should overcome our ethical shackles. If reason itself can make people unhappy, we should make a world that is less reasonable.

Deontologists build a tower and then remove all the structural support

Having first mocked Utilitarianism in a successful attempt to bring about more utility for the world, I now find myself with this strange compulsion to tell the truth: I think Deontology is a deeply flawed moral system too. And since I am being truthful, I think the places Deontology fails are more obvious.

Deontology asks you to use your reason to make rules, then to stop reasoning altogether. If you’re born into a world where a few good people already came up with all the rules a century ago, even better. Now follow them uncritically. Assume that this is a tenable, enduring resolution to morality; better yet, don’t assume at all—don’t even think unless you are obligated to.

If a murderer comes to your door and asks if your friend is upstairs, you say “yes.” Or maybe you start acting squirrelly, stall a bit, you know, without raising suspicion. Try to remind them that not killing is a categorical imperative. But remember that you can never lie to the murderer. Because what kind of world would we live in if not even murderers could trust their victims to be honest? One day we lie to the murderers, the next we lie to rapists, the next (I presume) we are all masturbating in the streets while the city burns.

And don’t even think about coercing the murderer, either. Because coercion is wrong. It’s the practice of the dutiless, and therefore something we must allow them to do.

Deontology falls apart the second a single person is born who doesn’t adhere to it. This person, let’s call him Ricky Gervais, realizes that there is a huge benefit to taking advantage of people who will never retaliate.

Deontologists build an ethical framework using reason and judgment, then forbid future adherents from using their own reason and judgment. They build a tower of moral reasoning, then remove the structural supports, hoping that it does not come tumbling to the ground.

But never mind. The Deontologist, not to be deterred, looks at the rubble and decides to reconstruct it. This time, the duties will be more sophisticated. Maybe not all categorical imperatives are created equal. Maybe not all of them are strictly imperative after all. So late in his life Kant distinguishes between imperfect duties and perfect duties. You’re obligated not to lie, but maybe your obligation to not allow your friend to be murdered takes priority (wow, great insight!).

The most sophisticated forms of Deontology follow this process over and over: use your reasoning faculties to reorder the categorical imperative hierarchy until your ruleset appears more reasonable. In practice this looks like W. D. Ross listing many prima facie “duties”[3]: fidelity, reparation, gratitude, justice, beneficence, non-maleficence, and self-improvement, then saying that in any given situation one duty takes priority over the others. In Virtue Ethics, we call these traits “virtues”, and we create people with the good judgment to use them. This form of 300-IQ Deontology has come to the same conclusion, but calls them “duties” and builds into itself only a weak mechanism of self-propagation.

Sophisticated Deontology has taken on the crab-like form of virtue ethics, arriving there only because people were using the practical wisdom borrowed from virtue ethics itself. Then it tells you that that’s enough reasoning, no need to continue. Follow the rules now, as is your duty.

Virtue Ethics solves everyone’s problems because it is good and the only thing that works

Virtue Ethics was most famously set down by Aristotle but was surely discovered long before him, thousands of times, whenever someone sat down to think about what would make the world better.

The biggest problem people cite about Virtue Ethics is that it is circular. Do what a good person would do. But what would a good person do? Well, they’d do what a good person would, obviously. I see this supposed flaw as an obvious virtue. A good person does their best to figure out what a good person would do, and then acts. They use their reason to avoid falling into paralysis. If they make a mistake and come to the wrong conclusion, they admit that they were wrong and then do it all over again next time. There’s virtue in this sort of circularity.

The other alleged flaw is that two people, each in the pursuit of goodness, can disagree about the correct option. Again, this only makes Virtue Ethics more resilient. It is great that two people can disagree. Now use your reasoning to debate. Utilitarianism does not allow for this sort of disagreement, as the only thing left to disagree about is the calculations. Deontology does not allow for disagreement either: once all the maxims have been reasoned, reasoning itself is the surest way to shirk your duties. But Virtue Ethics centers disagreement and calls it good.

What makes Virtue Ethics so simple and useful is that it perpetuates itself in perhaps the best example possible of a “virtuous cycle”. Virtue begets virtue; reason begets reason; good people create good people who do it all over again in the next generation. This framework doesn’t claim to have all the answers to every question, saying instead that the best way to answer them is to try to become wiser.

Virtue Ethics is more flexible than Deontology, trusting good people to make good judgments. It is more human than Utilitarianism, recognizing that people will always be better at making good moral judgments than machines or calculators; that the second you stop believing this is true is the second you welcome any number of monstrous Utilitarian conclusions.

When people take simple Utilitarianism and make it less monstrous, or when they take Deontology and make it less monstrously rigid, they are carcinizing these ethical frameworks into the form of Virtue Ethics. Moreover, they are using the very faculties of reason beloved by Virtue Ethics. Practical wisdom—not calculation, not duty—practical wisdom. And so they are relying on Virtue Ethics—the great, original crab—to rebuild their moral frameworks in its image so that they have a chance of survival in the real world.

Evolutionists think organisms are beholden to their environmental pressures, but it’s just as likely that evolution is beholden to this law of crabs. It can’t stop making them. Twist the dials just a little and you’ll get eight legs, two pincers, and lateral movement.

We wonder what life would look like on other planets. Well, whatever else is going on there, it seems certain that there will be crabs. And just as not all crabs are created equal, not all carcinized moral structures are equally good.

Take their relation to human flourishing. Deontologists support human flourishing incidentally and as a matter of duty, their true god. Utilitarians support human flourishing because they believe flourishing is the highest good. Virtue Ethicists, though, support human flourishing because they believe humanity is the highest good, and that we can be even better still.

Virtue Ethics is the only moral framework that both values humans intrinsically and also works to make them more valuable. It’s the only framework tested by fire in the real world and made stronger for it. Any other framework, when introduced to real-life complications, has its foundation shaken and is left with no choice but to converge toward Virtue Ethics, or die.

JFS

[1] Check out Joseph Rahi’s great essay on Virtue Ethics here, as well as a great follow-up by Both Sides Brigade.

[2] For more on Omelas, I recommend you read Harjas Sandhu’s post here for a great summary (or read the whole story).

[3] Read, “virtues”.

Obligatory XKCD: https://xkcd.com/2314/

I think you've done a good job presenting an interesting metaphor which shows how ethical systems converge. One issue I have run into with Virtue Ethics is that, at times, it does not provide falsifiable propositions. The failures of deontological systems are often as black and white as a nun's wardrobe, while virtue ethics' failures exist in an ocean of reinterpretable grey. I like asking the question: if virtuous people repeatedly end up miserable, exploited, or socially destroyed, does the theory collapse?